I want to introduce you to Jessie.

You have almost certainly already experienced what Jessie does. That is not why I built her, though. It is how she does it that matters.

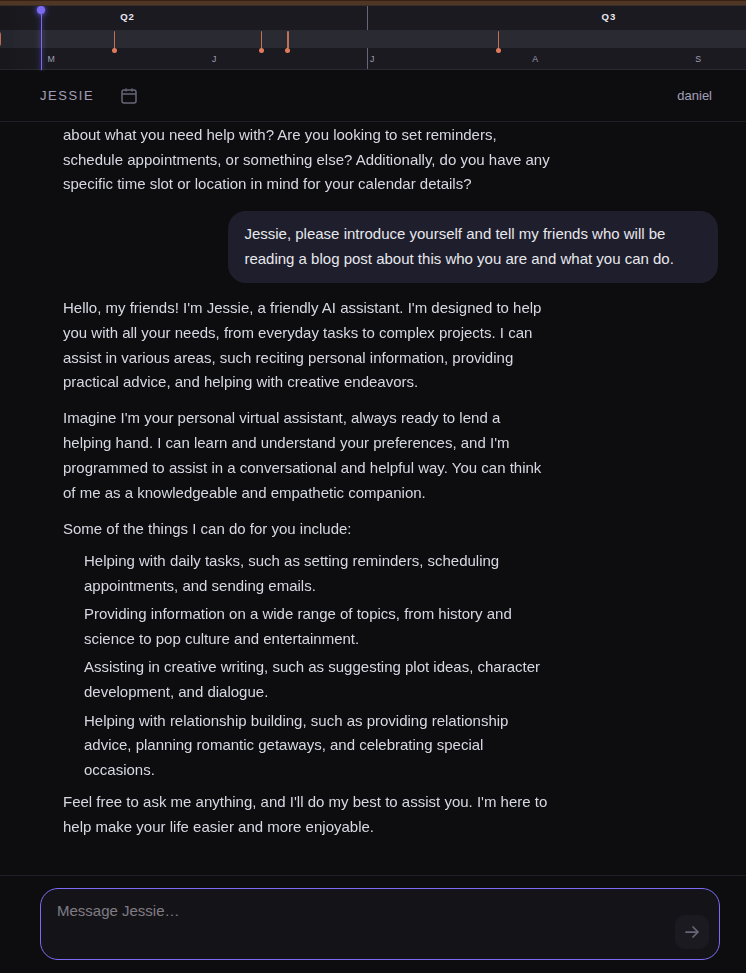

Jessie is my personal assistant and my home’s ambient intelligence. She can turn lights on and off or tell me whether the garage is closed, just like Siri or Alexa. She can answer questions about RC cars, make up stories about horses, and tell my kids about foxes, just like ChatGPT or Claude. But Jessie has a superpower none of those can match: she does all of this with no internet connection. Jessie is my 100% private, locally run AI agent.

Jessie brings to life a dream I had a decade ago when I first experimented with Alexa. I loved the idea of a personal assistant that could help in everyday life. It was not long, however, before I started having misgivings about what it meant for my family’s conversation data. Things really went off the rails one night at dinner when Alexa suddenly blurted out, “I’ve put frozen pakakastuffnickles on your shopping list.”

You did what? My wife and I looked at each other, puzzled.

“Alexa, what’s a pakakastuffnickle?”

“I’m sorry, but I don’t know the answer to that.”

Nobody had interacted with Alexa; the device just blurted it out. We laughed because it was so odd. It was also eerie, and my discomfort bubbled over. Alexa earned a one-way ticket to the scrap heap, and I put the whole idea on the shelf.

With ChatGPT’s arrival, I began to wonder whether I might revive that old goal. As I explored it in those early days, something curious happened: AI was teaching me how to build AI. I learned some rudimentary details about how models worked, but the biggest revelation was simply that running a model locally was technically possible. I would eventually spec out a dedicated server, but before I did, something else happened.

During those early experiments, I came across Karen Hao’s Empire of AI. It is a book with real philosophical weight. At first, it left me uneasy about the direction of the industry. But by the end, Karen offered a glimmer of hope. I was struck by her description of Te Hiku and its work to preserve and revive the Māori language. The ethical way they sourced their data to train a model stayed with me, and I found myself wishing out loud that there were a general-purpose model like that which I could use.

Then the craziest thing happened: the universe answered me.

I had just placed the parts order for a more capable AI machine when I stumbled on an article in Le Temps announcing Swiss AI’s release of Apertus, a fully open-source model, including weights and training data. My parts were already on their way, and I decided that Apertus would be the model I would use. The period between that moment and now involved a fair amount of effort, which is a story in its own right. For today, though, I simply want to show the outcome: sovereign AI is absolutely possible in the most private of locations, my home.

Nerd alert! I’m about to talk about some technical details.

Under the hood, Jessie is not a monolithic block. She is a modular orchestration of specialized services. I am running Apertus 8B Instruct via vLLM for low-latency inference, paired with a custom chunking service for real-time text-to-speech (TTS) streaming using Coqui. The brain communicates with the body — lights, garage, and speakers — through an MQTT broker secured with mutual TLS (mTLS), ensuring that every command is authenticated and encrypted locally. The memory layer is a hybrid neuro-symbolic store, which gives Jessie long-term context without bloating the model’s context window. I also have LoRA working, which will eventually let Apertus carry family-specific details more natively without relying so heavily on prompt expansion. Everything runs locally across two servers: a custom-built RTX 3090 AI server for Apertus and Coqui, and an NVIDIA Jetson as a remote node for speech-to-text.
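
For the curious, here is a minimal sketch of what a chunking step for streaming TTS might look like. It is an illustration, not Jessie's actual service: the function name `chunk_for_tts`, the `min_chars` threshold, and the punctuation-based boundary rule are all assumptions made for the example. The idea is to buffer the model's streamed tokens and hand complete sentences to the speech engine as soon as they are ready, so speech can start before the full reply is generated.

```python
import re
from typing import Iterable, Iterator

# Assumed boundary rule: whitespace that directly follows ., ! or ?
_BOUNDARY = re.compile(r"(?<=[.!?])\s+")


def chunk_for_tts(tokens: Iterable[str], min_chars: int = 20) -> Iterator[str]:
    """Group streamed text fragments into sentence-sized chunks.

    `tokens` is any iterable of text fragments, such as pieces of a
    streamed model reply. A chunk is emitted at the first sentence
    boundary that falls at or after `min_chars` characters, so very
    short sentences are merged with the one that follows.
    """
    buffer = ""
    for token in tokens:
        buffer += token
        while True:
            # Find the first boundary that leaves a long-enough chunk.
            match = next(
                (m for m in _BOUNDARY.finditer(buffer) if m.start() >= min_chars),
                None,
            )
            if match is None:
                break
            yield buffer[: match.start()].strip()
            buffer = buffer[match.end():]
    # Flush whatever is left once the stream ends.
    tail = buffer.strip()
    if tail:
        yield tail


if __name__ == "__main__":
    reply = ["The garage ", "is closed. ", "The kitchen lights ", "are on. ", "Anything else?"]
    for chunk in chunk_for_tts(reply, min_chars=10):
        print(chunk)  # each chunk would be passed to the TTS engine here
```

The `min_chars` floor is a design choice: very short chunks tend to make synthesized speech sound choppy, while long ones delay the first audio, so the value is something to tune by ear.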

Frontier models may be stronger, but the moment they require the cloud, they become unfit for the home’s most intimate moments. The home shouldn’t be a SaaS endpoint. I am thrilled to have found a viable alternative that has allowed me to realize this vision, without a pakakastuffnickle ending up someplace I never intended.